PyFlink In 2026: Better Than Its Reputation, Still Not Frictionless

Follow ML-Affairs On LinkedIn

Open the ML-Affairs page and follow it directly, or use the LinkedIn widget below.

I do not think teams reach for PyFlink because Python feels nicer to type.

They reach for it when they have already paid the cost of splitting one ML system across two ecosystems.

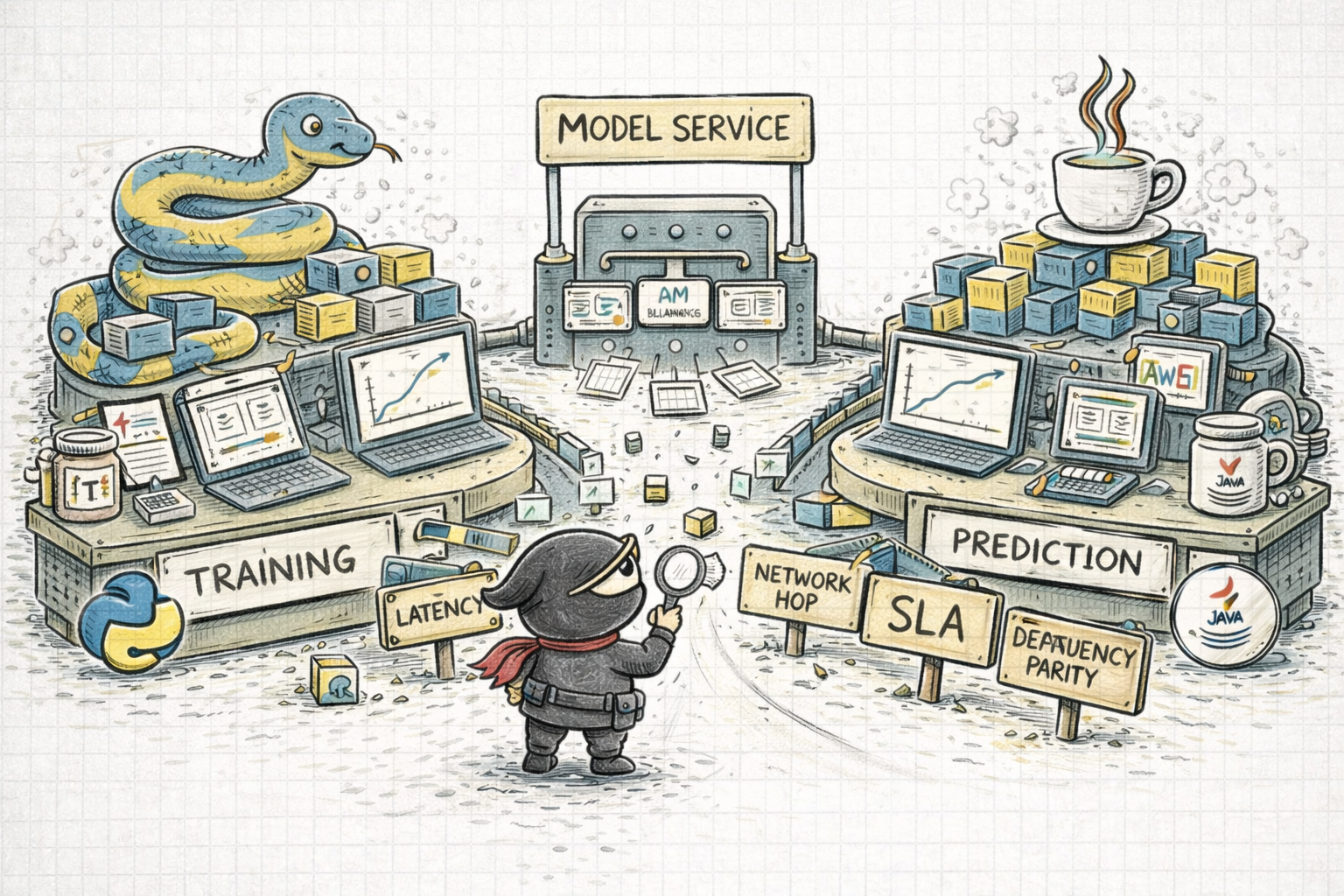

I have seen that pain in the most annoying way possible: training and experimentation lived in Python, but the prediction path had to live in Java. On paper that sounds manageable. In practice it meant subtle differences in floating-point behavior, parsing choices, and even heading-angle calculations were enough to create inconsistent predictions. We lost months chasing what looked like model problems and turned out to be feature mismatches.

That is the part many architecture discussions understate. Once training is in Python and prediction is in Java, the real problem is no longer just inference. It becomes feature parity, interface parity, and the feedback loop between two runtimes that each have their own libraries, their own defaults, and their own ways of being almost the same.

You can try to escape that with ONNX. You can rebuild parts of the feature logic in Java. You can expose the model behind a service boundary and call it remotely. All of these are reasonable patterns. None of them are free.

Four years ago, ONNX was not mature enough for the kinds of models and custom ops we cared about. The easy story broke precisely where real systems stop being toy examples. The fallback was the pattern most teams know well: deploy the model as a service and call it over REST. That works, but now your prediction pipeline owns an extra network hop, another SLA, another scaling surface, and one more place where raw features must remain perfectly aligned.

This is why I think the case for PyFlink should be stated more bluntly than it usually is:

If the real source of friction in your system is that your training, feature logic, and model-adjacent code live naturally in Python, then "just use Java Flink" is not a neutral suggestion.

It is an architectural trade, and often an expensive one.

That is the real driver for PyFlink adoption.

I went back to an older PyFlink review recently because I did not want to turn one painful period into a permanent opinion. Some of those frustrations had aged well. Some had not. And PyFlink is exactly the kind of technology people form a durable opinion about after one painful quarter and then never revisit.

That would have been lazy here, because the story has moved. PyFlink is in a better place now than many engineers assume. The official docs cover installation, packaging Python environments, debugging, a Python DataStream API, and connector examples. That is already a more serious platform story than the older dismissive take that it is simply immature.

But the core trade-off has not disappeared.

PyFlink is now real enough to take seriously, but it still does not let you forget that Flink is fundamentally a JVM-first distributed runtime. That is the part people need to hold in their head at the same time as the improvements.

What Has Improved Since The Older Evaluation

The first thing worth saying is that some of the older criticisms are now too blunt.

PyFlink is no longer just a thin curiosity around the Table API. The current docs cover installation, a Python DataStream API, debugging, dependency management, packaging Python environments for cluster execution, and connector examples:

That is already a materially better story than the one many engineers still carry around in their heads.

A few concrete improvements stand out:

1. The Python Story Is Better Documented

The installation docs now state clear Python version requirements. At the time of writing, PyFlink requires Python 3.9, 3.10, 3.11 or 3.12:

That sounds minor, but it is not. One of the easiest ways to waste time with cross-language frameworks is by discovering environment assumptions too late. The current docs at least acknowledge that this is a real part of the user experience.

2. The DataStream Story Is No Longer Hand-Wavy

One of the old reasons people dismissed PyFlink was that serious low-level streaming work still felt like Java territory.

That is less true now. The Python DataStream API is documented, examples exist, and the API surface is real enough that you can reason about it as a deliberate part of the platform rather than a side alley:

I would still be careful not to confuse “documented” with “equally frictionless as the JVM path,” but the old complaint that PyFlink is barely there is no longer a fair description.

3. Debugging And Packaging Are Better Acknowledged

The older review spent a lot of energy on setup, environment pain, and debugging awkwardness.

Those pains have not disappeared, but the current docs are more honest about them. They cover packaging Python environments, adding JARs, client-side versus TaskManager-side logging, local debugging, remote debugging, and profiling:

This matters because it tells you something important about the maturity of the ecosystem: it now documents the pain instead of pretending it is not there.

That is progress, even if it is not magic.

Why PyFlink Is Genuinely Attractive

Despite the caveats, I do think PyFlink has a very real value proposition.

1. It Keeps The Streaming Layer Closer To The Actual ML Ecosystem

This is the point I think most comparisons understate, and it is the one that matters most to me.

The strongest argument for PyFlink is not merely “our team prefers Python.” The stronger argument is that the surrounding model ecosystem, experimentation culture, libraries, and iteration loops are still centered on Python.

That matters when the alternative is forcing teams into one of these patterns:

- re-implementing logic in Java

- exporting models through formats like ONNX and accepting the translation burden

- splitting the system so aggressively that the serving boundary becomes the architecture

None of these are invalid. But all of them are real costs, and in many teams they are the actual costs driving interest in PyFlink.

If the same raw features are calculated in one language for training and another for live prediction, you do not just inherit maintenance overhead. You inherit doubt. When a prediction looks wrong, is the model wrong, is the data wrong, or did one side normalise, round, parse, or order something differently? That uncertainty is corrosive, and it slows every feedback loop around the system.

2. It Meets Python-Heavy Teams Where They Already Work

If your data and ML teams already live in Python, PyFlink reduces one major source of organisational friction.

That does not mean everyone suddenly gets to ignore distributed systems. But it does mean:

- feature logic can stay closer to the surrounding Python estate

- model-adjacent transformations feel more natural

- experimentation paths from notebook thinking to streaming execution become less culturally awkward

For some organisations, that is a very big deal.

The wrong reaction here is to sneer and say “just learn Java.” Sometimes that is the right answer. Often it is just a lazy one.

3. It Makes Flink More Reachable Without Hiding Flink

Good language bindings should not pretend the platform underneath does not exist.

PyFlink is useful when it gives Python teams access to Flink’s real strengths: state, checkpoints, event-time semantics, long-running streaming jobs, and broader dataflow capabilities. If that is what you are buying, then the Python layer can be a practical bridge.

That is especially true for teams whose work already mixes ETL, feature pipelines, and model-centric logic.

4. There Is A Real Connector Surface

This is another place where the older blanket criticism needs updating.

The current PyFlink docs and examples do show Kafka, Pulsar, and Elasticsearch examples in Python:

So it would be wrong to say that the connector story is absent.

But it would also be wrong to say that it feels like a pure Python ecosystem.

That brings me to the real downside.

Why PyFlink Is Still Not “Flink, But Easy”

The strongest criticism from the old evaluation still holds:

PyFlink reduces language friction, but it does not remove runtime friction.

1. You Still Have To Think In Two Worlds

The installation and FAQ pages make this clear if you read them carefully.

You have to think about:

- Python interpreter version

- Python packaging and archives

- where Python executes

- how dependencies are shipped

- JAR dependencies for connectors or Java-side integration

That earlier review made this painfully concrete. Getting local execution into a sane state meant lining up:

- the right Java version

- the right Python version

- the right connector JARs

- the right Python dependencies

That list is not just setup trivia. It is the operating model announcing itself early.

That is not a small footnote. It is the day-to-day ergonomics of the platform:

This is why I would resist overselling PyFlink to a Python team as “just write Python and the rest disappears.”

It does not disappear.

It relocates.

2. The Connector Story Still Leaks JVM Reality

The connector examples are useful, but they also reveal the real shape of things: adding JARs, managing connector dependencies, and living with the fact that some integration points are still fundamentally JVM-shaped.

Even the current Kafka connector docs explicitly talk about bringing connector dependencies yourself for PyFlink jobs:

That is not a deal-breaker. It is just not the same experience as working inside a native Python framework whose extension model is Python all the way down.

It also shows up in deployment. In that earlier review, the easiest workable path for local standalone deployment was not “package a Python app and run it.” It was closer to:

- start from a vanilla Flink image

- add the Python dependencies

- mount the repo or bundle the code carefully

- run the Python entrypoint from inside the live container

That is a perfectly workable path. It is also a strong reminder that the deployment experience is still shaped by Flink’s runtime model, not by Python’s usual ergonomics.

3. Debugging Still Tells You What The System Really Is

The current debugging docs are better than before, but they are also revealing.

They distinguish between client-side logging and TaskManager-side logging. They discuss local debug, remote debug, and profiling Python UDFs. That is helpful, but it also tells you that when things go wrong, you are not debugging a simple Python program. You are debugging Python inside a distributed Flink runtime:

In practice, that means some classes of issue still feel cross-boundary by nature:

- packaging bugs

- dependency mismatches

- behavioural differences between local and cluster execution

- performance bottlenecks around Python execution paths

This is not PyFlink being uniquely bad. It is just the cost of the abstraction being honest.

4. Native Python Models Are Not An Automatic Architectural Win

This was one of the more useful parts of the earlier review, because it is exactly the kind of point people skip when they are trying to justify a new stack.

Yes, being able to interact with model code directly inside a PyFlink job is a real plus. It can simplify some flows and avoid a network hop.

But that is not the same as saying it is always the better architecture.

Once the model is served behind a proper boundary, you often gain things that matter a lot in production:

- safer zero-downtime upgrades

- cleaner readiness and health semantics

- independent model scaling behind a load balancer

- a clearer separation between streaming orchestration and serving concerns

So, yes, native execution can save some overhead. But it can also collapse boundaries that were doing useful work for you.

The reason I still take the native path seriously is not hand-wavy elegance. It is that model-as-a-service also comes with a bill:

- every prediction path now pays a network round trip

- the serving tier becomes another system you need to scale for throughput and protect with its own SLA

- raw feature generation has to stay perfectly aligned across the caller and the served model boundary

If demand is modest, teams can live with that for a long time. Once prediction volume rises, that architecture stops being an abstract diagram and starts showing up as latency, capacity planning, and operational drag.

5. The Performance Question Never Fully Goes Away

I would be very careful here not to pretend a benchmark I have not run.

But I am comfortable saying something narrower and more useful: if your workload is highly latency-sensitive, connector-heavy, or operationally unforgiving, the JVM path still deserves to be the default starting point.

PyFlink can absolutely be the right choice. I just would not choose it because I wanted to avoid understanding the Java side of Flink.

That is not how this platform works.

So When Would I Use It?

I would take PyFlink seriously when these conditions hold:

- the team is materially more fluent in Python than in Java

- the reason for adopting Flink is the runtime model, not fashion

- the jobs are important, but not balanced on the sharpest latency edge

- I am willing to own environment packaging and connector dependency management as part of the operating model

I would lean back toward Java Flink when:

- connector maturity dominates the problem

- the hot path is extremely performance-sensitive

- the team already has strong JVM strength

- I expect deep platform integration and want the least surprising execution path

If You Want To Try It

If this post pushed you toward experimenting rather than debating in the abstract, I put together a small starter page here:

It is intentionally minimal. The goal is not to hand you a grand framework. The goal is to give you a sensible first project shape and an agent prompt that can get a small Python-first streaming scaffold off the ground without immediate chaos.

The Practical Takeaway

What matters here is not whether PyFlink is “good” or “bad.”

That is far too vague to help anyone.

The better question is this:

Do I want Python as the working language for a Flink system badly enough to own the extra operational boundary that comes with it?

If the answer is yes, PyFlink is now mature enough to be a serious option.

If the answer is no, then Java Flink is still the cleaner way to get the full benefits of Flink without pretending the JVM underneath is someone else’s problem.

That, at least, is the view I would hold today.